Executive Summary – Pros, Cons, and Alternatives to Apache Airflow

- Open source Apache Airflow fills an important gap in the big data ecosystem by providing a way to define, schedule, visualize, and monitor the underlying jobs needed to operate a big data pipeline.

- Airflow was built for batch data, not streaming data. It also requires coding skills, is brittle, and creates technical debt.

- Upsolver SQLake uses a declarative approach to pipelines and automates workflow orchestration so you can forgo the complexity of Airflow and still deliver reliable pipelines on batch and streaming data at scale.

About Apache Airflow

Apache Airflow is a powerful and widely used open-source workflow management system (WMS) designed to programmatically author, schedule, orchestrate, and monitor data pipelines and workflows. Airflow enables you to manage your data pipelines by authoring workflows as Directed Acyclic Graphs (DAGs) of tasks. There’s no concept of data input or output – just flow. You manage task scheduling as code. You can also visualize your data pipelines’ dependencies, progress, logs, code, and success status, and trigger tasks.

Airflow was developed by Airbnb to author, schedule, and monitor the company’s complex workflows. Airbnb open-sourced Airflow early on, and it became a Top-Level Apache Software Foundation project in early 2019.

Written in Python, Airflow has become popular, especially among developers, due to its focus on configuration as code. Airflow’s proponents consider it to be distributed, scalable, flexible, and well-suited to handle the orchestration of complex business logic.

Apache Airflow: Orchestration via DAGs

Airflow enables you to:

- orchestrate data pipelines over object stores and data warehouses

- run workflows that are not data-related

- create and manage scripted data pipelines as code (Python)

Airflow organizes your workflows into DAGs composed of tasks. A scheduler executes tasks on a set of workers according to any dependencies you specify – for example, to wait for a Spark job to complete and then forward the output to a target. You add tasks or dependencies programmatically, with simple parallelization that’s enabled automatically by the executor.
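The following minimal plain-Python sketch shows how a scheduler can turn declared dependencies into an execution order. It does not use Airflow’s API. It relies on the standard library’s `graphlib.TopologicalSorter` (Python 3.9+), and the task names are hypothetical. Tasks that become ready at the same time are the ones an executor could run in parallel.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
dependencies = {
    "extract_orders": set(),
    "extract_users": set(),
    "spark_transform": {"extract_orders", "extract_users"},
    "load_to_target": {"spark_transform"},
}

def plan_batches(graph):
    """Group tasks into batches. Every task in a batch has all of its upstream
    dependencies satisfied, so tasks within one batch could run in parallel."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for deterministic output
        batches.append(ready)
        sorter.done(*ready)  # mark the batch finished to unlock downstream tasks
    return batches

for step, batch in enumerate(plan_batches(dependencies), start=1):
    print(step, batch)
```

If the dependencies contained a cycle, `prepare()` would raise `graphlib.CycleError`. That is why workflows are modeled as acyclic graphs.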

The Airflow UI enables you to visualize pipelines running in production, monitor progress, and troubleshoot issues when needed. Airflow’s visual DAGs also provide data lineage, which facilitates debugging of data flows and aids in auditing and data governance. You also have several deployment options, including self-managed open source or a managed service.

Advantages of Apache Airflow: Ideal Use Cases

Prior to the emergence of Airflow, common workflow or job schedulers managed Hadoop jobs and generally required multiple configuration files and file system trees to create DAGs (examples include Azkaban and Apache Oozie).

But in Airflow it could take just one Python file to create a DAG. And because Airflow can connect to a variety of data sources – APIs, databases, data warehouses, and so on – it provides greater architectural flexibility.

Airflow is most suitable:

- when you must automatically organize, execute, and monitor data flow

- when your data pipelines change slowly (days or weeks – not hours or minutes), are related to a specific time interval, or are pre-scheduled

- for ETL pipelines that extract batch data from multiple sources and run Spark jobs or other data transformations
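A batch scheduler that is tied to a time interval slices time into fixed windows and launches one run per completed window. The sketch below illustrates that model in plain Python. It is a simplified illustration, not Airflow’s scheduler. The daily interval, the dates, and the `completed_intervals` helper are hypothetical. Airflow associates scheduled runs with data intervals in a broadly similar way.

```python
from datetime import datetime, timedelta

def completed_intervals(start, now, interval):
    """Hypothetical helper: return (interval_start, interval_end) pairs for
    every window that has fully elapsed between `start` and `now`.
    Each window would get exactly one batch run."""
    windows = []
    window_start = start
    while window_start + interval <= now:
        windows.append((window_start, window_start + interval))
        window_start += interval
    return windows

start = datetime(2024, 1, 1)
now = datetime(2024, 1, 4, 6, 30)  # partway through January 4
for begin, end in completed_intervals(start, now, timedelta(days=1)):
    print(f"batch run for {begin:%Y-%m-%d} -> {end:%Y-%m-%d}")
```

The window that is still open (January 4) produces no run yet. Data arriving mid-window waits for the next run. That delay is acceptable when pipelines change slowly and follow a fixed cadence.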

Other applicable use cases include:

- machine learning model training, such as triggering a SageMaker job

- automated generation of reports

- backups and other DevOps tasks, such as submitting a Spark job and storing the resulting data on a Hadoop cluster

But as with most applications, Airflow is not a panacea, and is not appropriate for every use case. There are also certain technical considerations even for ideal use cases.

Disadvantages of Airflow: Managing Workflows with Airflow Can Be Painful

First and foremost, Airflow orchestrates batch workflows. It is not a streaming data solution. The scheduling process is fundamentally different between batch and streaming:

- batch jobs (and Airflow) rely on time-based scheduling

- streaming pipelines use event-based scheduling

Airflow doesn’t manage event-based jobs. It operates strictly in the context of batch processes: a series of finite tasks with clearly defined start and end tasks, run at set intervals or triggered by sensors. Batch jobs are finite: you create the pipeline and run the job. But streaming jobs are (potentially) infinite; you create your pipelines and then they run constantly, reading events as they emanate from the source. It’s impractical to spin up an Airflow pipeline at set intervals, indefinitely.
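The sketch below contrasts a finite batch job with a potentially endless stream in plain Python. The `process` function and the simulated `event_source` are hypothetical stand-ins. A real stream would come from a source such as a message queue. The example only terminates because it explicitly takes the first few events.

```python
import itertools
from typing import Iterable, Iterator

def process(record: int) -> int:
    # Hypothetical transformation applied to each record.
    return record * 10

def run_batch(records: list[int]) -> list[int]:
    """Batch job: the input is finite and known up front, so the job has a
    clear start and end and can be scheduled at fixed intervals."""
    return [process(r) for r in records]

def event_source() -> Iterator[int]:
    """Simulated unbounded source: yields events forever."""
    yield from itertools.count(1)

def run_streaming(events: Iterable[int]) -> Iterator[int]:
    """Streaming job: handles each event as it arrives and never finishes on
    its own, so there is no natural end point to schedule around."""
    for event in events:
        yield process(event)

print(run_batch([1, 2, 3]))  # the job completes
print(list(itertools.islice(run_streaming(event_source()), 3)))  # stops only because we take 3
```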

Pipeline versioning is another consideration. Storing metadata about changes to your workflows helps you analyze what has changed over time. But Airflow does not offer versioning for pipelines. That makes it challenging to track the version history of your workflows, diagnose issues caused by changes, and roll back pipelines.
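The sketch below shows a hypothetical homegrown approach to pipeline versioning. It fingerprints each pipeline definition and records a new version only when the fingerprint changes. That record lets you later tell which version was live when something broke. The `fingerprint` and `record_version` helpers, the in-memory history, and the dictionary format of the definitions are illustrative assumptions, not an Airflow feature.

```python
import hashlib
import json

def fingerprint(definition: dict) -> str:
    """Stable hash of a pipeline definition (tasks and their upstream tasks)."""
    canonical = json.dumps(definition, sort_keys=True)  # key order must not matter
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]

def record_version(history: list, definition: dict) -> bool:
    """Hypothetical helper: append a new version only if the definition changed."""
    digest = fingerprint(definition)
    if history and history[-1]["fingerprint"] == digest:
        return False
    history.append({"version": len(history) + 1, "fingerprint": digest,
                    "definition": definition})
    return True

history = []
v1 = {"extract": [], "transform": ["extract"], "load": ["transform"]}
v2 = {"extract": [], "transform": ["extract"], "validate": ["transform"],
      "load": ["validate"]}
record_version(history, v1)
record_version(history, v1)  # unchanged, so no new version is recorded
record_version(history, v2)
print([h["version"] for h in history])  # [1, 2]
```

Under this scheme, rolling back would amount to redeploying an earlier entry’s `definition`.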

Also, while Airflow’s scripted “pipeline as code” is quite powerful, it requires experienced Python developers to get the most out of it. Python expertise is needed to:

- create the jobs in the DAG

- stitch the jobs together in sequence

- program other necessary data pipeline activities to ensure production-ready performance

- debug and troubleshoot

As a result, Airflow is out of reach for non-developers such as SQL-savvy analysts, who lack the technical knowledge to access and manipulate the raw data. This is true even for managed Airflow services such as Amazon Managed Workflows for Apache Airflow (MWAA) or Astronomer.

Also, when you script a pipeline in Airflow, you’re essentially hand-coding what the database world calls an Optimizer. Databases include Optimizers as a key part of their value. Big data systems don’t have Optimizers; you must build them yourself, which is why Airflow exists.

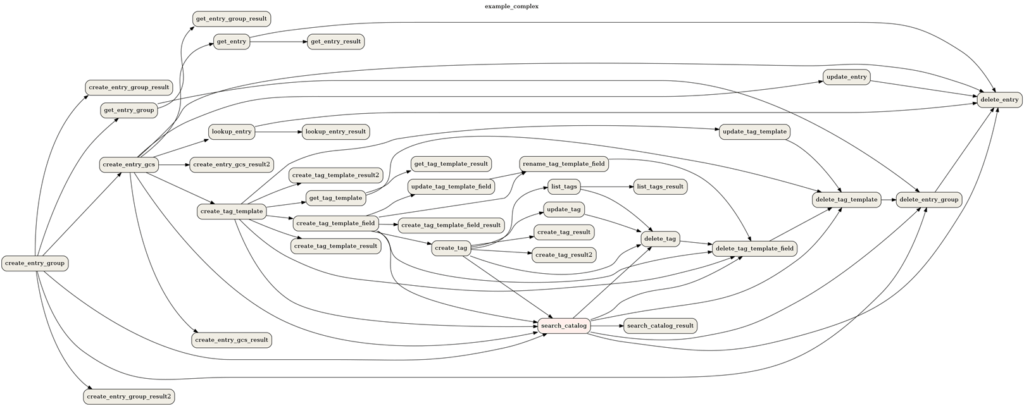

Further, Airflow DAGs are brittle. Big data pipelines are complex. There are many dependencies, many steps in the process, each step is disconnected from the other steps, and there are different types of data you can feed into that pipeline. A change somewhere can break your Optimizer code. And when something breaks it can be burdensome to isolate and repair. For example, imagine being new to the DevOps team, when you’re asked to isolate and repair a broken pipeline somewhere in this workflow:

Source: Apache Foundation
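Part of what makes a failure hard to isolate is that it propagates: every task downstream of the broken step is blocked as well. The plain-Python sketch below computes that blast radius with a breadth-first traversal. The DAG and the `blocked_by` helper are hypothetical. The sketch illustrates the dependency reasoning involved; it is not how Airflow itself assigns task states.

```python
from collections import deque

# Hypothetical DAG as adjacency lists: task -> tasks that depend on it.
downstream = {
    "ingest_api": ["clean"],
    "ingest_db": ["clean", "audit"],
    "clean": ["aggregate", "train_model"],
    "aggregate": ["report"],
    "train_model": [],
    "audit": [],
    "report": [],
}

def blocked_by(failed_task: str, graph: dict) -> set:
    """Return every task that cannot run because `failed_task` failed."""
    blocked, queue = set(), deque([failed_task])
    while queue:
        for child in graph.get(queue.popleft(), []):
            if child not in blocked:
                blocked.add(child)
                queue.append(child)
    return blocked

print(sorted(blocked_by("clean", downstream)))  # ['aggregate', 'report', 'train_model']
```

Hunting for the root cause is the reverse question: which upstream tasks could have produced the failure. Answering it requires walking the same graph in the opposite direction.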

Finally, a quick Internet search reveals other potential concerns:

- steep learning curve

- operators execute code in addition to orchestrating workflow, further complicating debugging

- many components to maintain along with Airflow (cluster formation, state management, and so on)

- difficulty sharing data from one task to the next
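Airflow’s built-in mechanism for passing values between tasks, XCom, is intended for small pieces of data. By default it stores them in Airflow’s metadata database. As a result, larger datasets usually have to be written to external storage and passed along by reference. The toy sketch below mimics that pattern with a hypothetical in-memory `MetadataStore` class. It is not the XCom API, and the bucket path is a placeholder.

```python
import json

class MetadataStore:
    """Hypothetical stand-in for a metadata database that tasks use to pass
    small values to each other. Values are serialized to JSON, so only
    simple, JSON-compatible data can be shared this way."""

    def __init__(self):
        self._rows = {}

    def push(self, task_id: str, key: str, value) -> None:
        # json.dumps raises TypeError for values it cannot serialize.
        self._rows[(task_id, key)] = json.dumps(value)

    def pull(self, task_id: str, key: str):
        return json.loads(self._rows[(task_id, key)])

store = MetadataStore()

# Upstream task: writes its (large) output elsewhere and shares only a reference.
store.push("extract", "output_path", "s3://example-bucket/orders/2024-01-01/")  # placeholder path
store.push("extract", "row_count", 125000)

# Downstream task: pulls the reference, not the data itself.
print(store.pull("extract", "output_path"))

try:
    store.push("extract", "raw_rows", {1, 2, 3})  # a Python set is not JSON-serializable
except TypeError as err:
    print("cannot share directly:", err)
```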

It’s fair to ask whether any of the issues above matter, since you must orchestrate your pipelines no matter what. And Airflow is a significant improvement over previous methods. So is it simply a necessary evil? Well, not really – you can abstract away orchestration in the same way a database would handle it “under the hood” via an Optimizer.

Eliminating Complex Orchestration with the Declarative Pipelines of Upsolver SQLake

Airflow requires scripted (or imperative) programming; you must decide on and indicate the “how” in addition to just the “what” to process. But in a declarative data pipeline, you specify (or declare) your desired output, and leave it to the underlying system to determine how to structure and execute the job to deliver this output.
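The imperative/declarative distinction can be illustrated in plain Python. In the imperative version, the author fixes the order of steps by hand. In the declarative version, the author only declares each output table and what it is built from, and a small hypothetical planner (`run_declarative`) infers the order. This is a toy sketch of the general idea, not SQLake’s implementation. The sample rows and table names are made up.

```python
from graphlib import TopologicalSorter

raw = [{"user": "a", "amount": 5}, {"user": "b", "amount": 7},
       {"user": "a", "amount": 3}, {"user": "c", "amount": -2}]

def clean(rows):
    return [r for r in rows if r["amount"] > 0]

def total_by_user(rows):
    totals = {}
    for r in rows:
        totals[r["user"]] = totals.get(r["user"], 0) + r["amount"]
    return totals

# Imperative: the author decides *how*, including the exact order of steps.
def imperative_pipeline(rows):
    cleaned = clean(rows)
    return total_by_user(cleaned)

# Declarative: the author declares *what* each table is built from.
# The declarations are deliberately listed out of execution order.
declarations = {
    "totals": (["cleaned"], total_by_user),
    "cleaned": (["raw"], clean),
}

def run_declarative(decls, sources):
    """Hypothetical planner: infer execution order from the declared inputs."""
    tables = dict(sources)
    graph = {name: set(inputs) for name, (inputs, _) in decls.items()}
    for name in TopologicalSorter(graph).static_order():
        if name in decls:  # source tables such as "raw" are given, not built
            inputs, build = decls[name]
            tables[name] = build(*(tables[i] for i in inputs))
    return tables

print(imperative_pipeline(raw))  # {'a': 8, 'b': 7}
print(run_declarative(declarations, {"raw": raw})["totals"])  # same result
```

Both versions produce the same totals. In the declarative version, however, adding or reordering declarations never requires the author to revisit the execution order.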

Upsolver SQLake is a declarative data pipeline platform for streaming and batch data. SQLake users can easily develop, test, and deploy pipelines that extract, transform, and load data in the data lake and data warehouse in minutes instead of weeks.

But there’s another reason, beyond speed and simplicity, that data practitioners might prefer declarative pipelines: orchestration covers more than just moving data. It also describes the workflow for data transformation and table management. With Airflow, you must still write the transformation code manually, in Spark Streaming, Apache Flink, or Apache Storm.

But SQLake’s declarative pipelines handle the entire orchestration process, inferring the workflow from the declarative pipeline definition. They automate tasks including job orchestration and scheduling, data retention, and the scaling of compute resources. They also automate the management and optimization of output tables, including:

- various partitioning strategies

- conversion to column-based format

- optimizing file sizes (compaction)

- upserts (updates and deletes)
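Compaction is one of these automated tasks. The sketch below shows a simplified version of compaction planning. Small files are grouped into batches whose combined size stays under a target, and each batch would then be rewritten as one larger file. The next-fit greedy strategy, the 128 MB target, and the file sizes are illustrative assumptions. This is not how SQLake actually compacts data.

```python
def plan_compaction(file_sizes_mb, target_mb=128):
    """Next-fit greedy grouping: walk the files in order and start a new batch
    whenever adding the next file would exceed `target_mb`. Files already at
    or above the target are left alone."""
    batches, current, current_size = [], [], 0
    for size in file_sizes_mb:
        if size >= target_mb:
            continue  # already large enough; no need to rewrite it
        if current and current_size + size > target_mb:
            batches.append(current)
            current, current_size = [], 0
        current.append(size)
        current_size += size
    if current:
        batches.append(current)
    # Rewriting a lone file gains nothing, so keep only multi-file batches.
    return [batch for batch in batches if len(batch) > 1]

small_files = [40, 30, 50, 10, 200, 90, 60, 5]  # hypothetical sizes in MB
for batch in plan_compaction(small_files):
    print(batch, "->", sum(batch), "MB")
```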

Further, SQLake ETLs are automatically orchestrated whether you run them continuously or on specific time frames. There’s never a need to write any orchestration code in Apache Spark or Airflow. This is how SQLake makes Airflow redundant in most instances. That includes orchestrating and managing complex pipeline workflows at scale across a range of use cases, such as machine learning.

And since SQL is SQLake’s configuration language for declarative pipelines, anyone familiar with SQL can create and orchestrate their own workflows.

Try SQLake for Free

SQLake is Upsolver’s newest offering. It lets you build and run reliable data pipelines on streaming and batch data via an all-SQL experience. Try it for free, using either sample data or your own data. No credit card required.