Explore our expert-made templates & start with the right one for you.

Table of contents

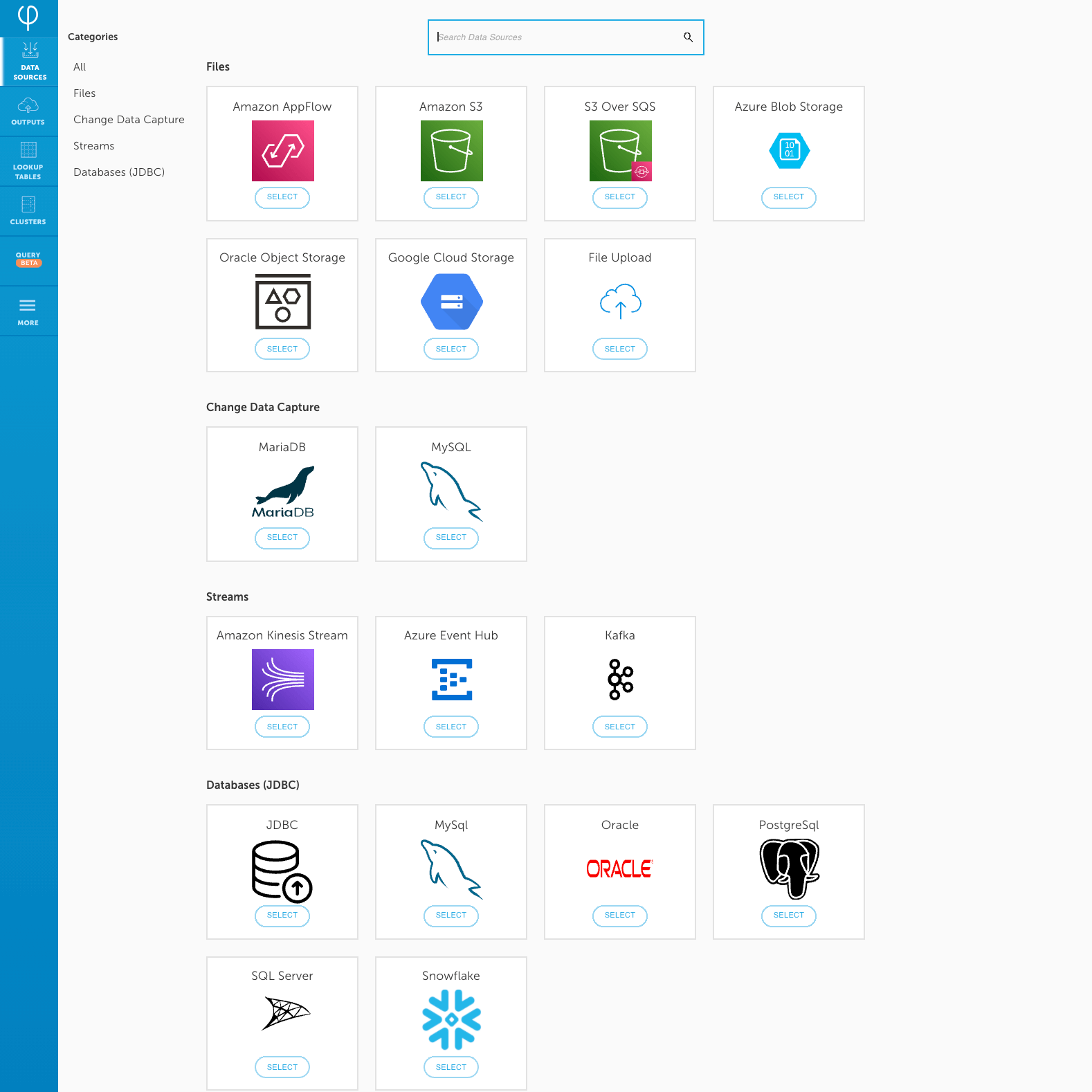

Click-to-connect data sources

Upsolver makes it easy to configure data ingestion through a visual interface. Simply select native connectors to event streams, file systems on cloud object storage, and databases via log-based change data capture (CDC) which transmits database changes as an event stream.

Regardless of the source, Upsolver continuously stores a raw copy of the data in Avro format for lineage and historical replay, while generating and outputting consumption-ready tables based on your desired transformations (see below). It also integrates natively with Hive metadata stores such as AWS Glue Data Catalog and Azure Data Catalog.

Data source connections can also be established using the Upsolver Rest API.

Streaming sources

For streaming data sources, Upsolver provides exactly once ingestion via connectors for Kafka, Amazon Kinesis and Azure Event Hub. Simply select the host, topic and format to connect the source.

Large jobs or workloads with high variability are not an issue, as Upsolver auto-scales processing power to support even petabyte-scale uses. Upsolver has production use cases where customer’s ingest millions of events per second which are continuously processed and output into query-ready tables.

Cloud object storage

You can ingest files that you’re loading into Amazon S3, Azure Blob Storage and Google Cloud Storage through an non-Upsolver process. Creating an object store data source on Upsolver is very simple. On AWS, Upsolver will auto-detect the file format’s date pattern and show you a preview of the files to be ingested with their corresponding timestamps. It will also auto-detect when to start the ingestion from, based on activity.

You can also filter the files that are ingested by

- specifying a file name pattern

- specifying the content format and its associated content format options (e.g. custom delimiter for CSV)

- selecting a specific compute cluster

- selecting a target storage option

Databases via JDBC or Change Data Capture (CDC)

Cloud or on-prem relational databases such as MySQL, PostGresDB and RDS are supported through queries made via JDBC or via log-based CDC (recommended), whereby the database log is monitored and changes are ingested, with Upsolver automatically handling UPSERTs to ensure the output tables in your data lake or external data store (e.g. cloud data warehouse) remain strongly consistent with the source tables in the database.

Upsolver also automatically deals with data drift. It keeps data copies from diverging as fields change. It also allows you to replay data to reflect updates to your business logic without having to re-import the entire database.

CDC happens automatically and continuously, so you can:

- Accurately reflect changes to operational databases in near real-time reports.

- Dramatically reduce engineering time and resources spent on ETLs and lake data management.

- Easily address data privacy and GDPR requirements.